Workshop Format

- This will be an online workshop with pre-recorded videos by the invited speakers and selected papers uploaded for viewing.

- A Slack channel (ws11) is open on the ICRA 2020 workspace for posting and discussing material over a four-week period.

- The workshop culminates in a 3-hour live Q&A and discussion session with the speakers.

Dates

1st June, 2020 – Slack channel (ws11) opens on the ICRA 2020 workspace

15th June, 2020 – Videos by invited speakers and accepted papers released

1 – 28 June, 2020 – Open discussion on Slack

29th June, 2020 – 12:00 to 15:00 UTC – Live Q&A with the speakers.

Registration

Participation in this workshop is free of charge and no registration is required. Please contact us at [email protected] if you would like to participate, and we will send you an invite.

Overview

Dynamic scenes extend and challenge a number of mature research areas in both robotics and computer vision, including simultaneous localisation and mapping (SLAM), structure from motion (SFM), visual odometry (VO), and multiple object detection and tracking. Most existing solutions to these problems rely heavily on the assumption that the environment is static. This drastically reduces the amount of information that can be obtained in complex environments cluttered with moving objects and may cause the techniques to fail. It also discards important relationships between moving sensors and objects, which matter for tasks such as obstacle avoidance in robotics and intelligent transportation.

This workshop seeks to motivate and investigate approaches designed for dynamic environments. It will do this by drawing on expertise in both the robotics and computer vision communities to highlight the challenges of dynamic scenes, to examine the existing capabilities and limitations of sensor modalities and computational techniques, and to discuss potential ways forward in developing algorithms to map, estimate, and understand the dynamic world.

The main topics covered by the workshop are the following:

- Scene flow

- Multi-body SLAM

- Multi-motion visual odometry

- Multiple object tracking (MOT)

- Dynamic scene representation

- Multi-body structure from motion

- Multi-target tracking

- Background / foreground segmentation

- Motion detection

- Motion segmentation

- Sensing the dynamic scene

- Datasets in dynamic environments

We are happy to announce the following invited speakers:

Talks

Please click on the titles or thumbnails to access the videos of the talks.

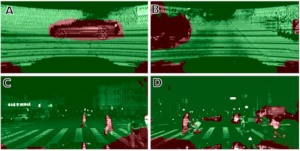

Sensing the World with Event-based Cameras

Davide Migliore – Prophesee

Slides PDF

A Dynamic Object-invariant Space for Dynamic Visual SLAM

Cesar Cadena – ETH Zurich

Beyond SLAM: Actionable Perception of Places, Objects and Humans

Luca Carlone – MIT

Deep Direct Visual SLAM

Daniel Cremers – TU Munich

Slides PDF

Towards Metric Multimotion Estimation

Jonathan Gammell – Oxford Robotics Institute

Understanding Performance Characteristics of Neuromorphic Event-based Vision Sensors

Kynan Eng – CEO of iniVation AG

Slides PDF

Recognition, tracking and reconstruction of multiple moving objects – Live talk during the Q&A session

Lourdes Agapito – University College London

Probabilistic and Machine Learning Approaches for Autonomous Robots and Automated Driving – full video link

Wolfram Burgard – University of Freiburg

Spatial AI in AR/VR

Matia Pizzoli – Facebook AR/VR – Live talk during the Q&A session

Accepted Papers

Cross-modal Transfer Learning for Segmentation of Non-Stationary Objects Using LiDAR Intensity Data

Tomasz Novak, Krzysztof Ćwian, Piotr Skrzypczyński – Poznan University of Technology, Poland

Paper PDF

Agent-Aware State Estimation: Effective Traffic Light Classification for Autonomous Vehicles

Shane Parr*, Ishan Khatri*, Justin Svegliato, Shlomo Zilberstein – University of Massachusetts Amherst, USA

Paper PDF

* Equal contribution

Q&A Session:

A video recording of the session can be found here.

Workshop organizers:

Sponsors:

The workshop targets applications in intelligent transportation, augmented reality and new sensors. Our sponsors are:

Workshop Contact:

Please direct all your queries to