When: Thursday 15th of April, 1pm AEST

Where: This seminar will be presented online, RSVP here.

Speakers: Max Revay, Dr Ruigang Wang

Title: Lipschitz bounded equilibrium networks

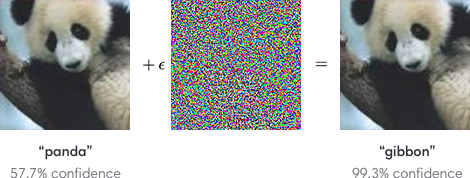

Abstract: This talk presents new parameterizations of equilibrium neural networks (networks defined by implicit equations), a class that includes many standard deep learning models as special cases. The new parameterization allows Lipschitz bounds to be imposed easily during training using unconstrained optimization methods such as stochastic gradient descent. Lipschitz bounds play an important role in certifying the robustness of neural networks and in analyzing their generalization properties. We will also discuss connections to convex optimization and neural ODEs. Image classification experiments show that the Lipschitz bounds are very accurate and that they improve robustness to adversarial attacks.
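To make "networks defined by implicit equations" and "Lipschitz bound" concrete, here is a minimal sketch. It is not the speakers' parameterization. It assumes an equilibrium layer z = ReLU(W z + U x + b) with output y = C z, and it uses the simple sufficient condition ||W||_∞ < 1 so that plain fixed-point iteration converges. Under that assumption, the crude bound L = ||C||_∞ ||U||_∞ / (1 − ||W||_∞) holds in the ∞-norm. All function names here are hypothetical, and the matrices are illustrative only.

```python
# Minimal equilibrium-network sketch (NOT the talk's parameterization).
# Assumption: contraction condition ||W||_inf < 1 with a 1-Lipschitz ReLU.

def relu(v):
    return [max(0.0, a) for a in v]

def matvec(M, v):
    return [sum(m * a for m, a in zip(row, v)) for row in M]

def inf_norm(M):
    # Induced infinity-norm: maximum absolute row sum.
    return max(sum(abs(m) for m in row) for row in M)

def solve_equilibrium(W, U, b, x, tol=1e-10, max_iter=1000):
    """Solve z = relu(W z + U x + b) by fixed-point iteration."""
    if inf_norm(W) >= 1.0:
        raise ValueError("sketch assumes ||W||_inf < 1 (contraction)")
    Ux_b = [u + c for u, c in zip(matvec(U, x), b)]
    z = [0.0] * len(W)
    for _ in range(max_iter):
        z_next = relu([w + ub for w, ub in zip(matvec(W, z), Ux_b)])
        if max(abs(a - c) for a, c in zip(z_next, z)) < tol:
            return z_next
        z = z_next
    raise RuntimeError("fixed-point iteration did not converge")

def forward(W, U, b, C, x):
    # Network output y = C z*, where z* is the equilibrium point.
    return matvec(C, solve_equilibrium(W, U, b, x))

def lipschitz_bound(W, U, C):
    # From ||dz|| <= ||W|| ||dz|| + ||U|| ||dx||, so ||dz|| <= ||U|| / (1 - ||W||) ||dx||.
    return inf_norm(C) * inf_norm(U) / (1.0 - inf_norm(W))

W = [[0.2, -0.3], [0.1, 0.4]]
U = [[1.0, 0.5], [-0.5, 1.0]]
b = [0.1, -0.2]
C = [[1.0, -1.0]]
print(lipschitz_bound(W, U, C))  # 6.0
```

A bound like this is usually loose. The abstract's claim is that the proposed parameterization yields accurate bounds and lets them be enforced during unconstrained training, which this sketch does not attempt.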

Bios:

Max Revay received his bachelor's degree in Mechatronics and Applied Mathematics from the University of Sydney in 2017. Since then, he has been a Ph.D. student at the Australian Centre for Field Robotics, working on robust machine learning. His research interests include robust machine learning, nonlinear control, and networked systems.

Dr Ruigang Wang received his PhD degree from UNSW, Sydney, Australia, in 2017. From 2017 to 2018, he was a research associate in the Department of Chemical Engineering, UNSW. Since 2018, he has been a postdoctoral researcher at the Australian Centre for Field Robotics, The University of Sydney. His research interests include robust machine learning, contraction theory, and model predictive control.