When: Wednesday 17th of August, 1pm AEST

Where: This seminar will be presented in hybrid format, in person at the Rose Street Seminar area (J04) and online via Zoom. RSVP here.

Speaker: Liyang Liu

Title: Towards Observable Urban Visual SLAM

Abstract:

Visual Simultaneous Localisation and Mapping (V-SLAM) is the problem of estimating camera poses and a map of the environment by drawing inference from captured image data. Self-driving cars in urban environments and UAVs flying towards a scene often move along a straight line, so the camera travels directly towards the scene it observes. Under such motion, many visual features have small parallax angles and cannot be reliably estimated. As a result, classical V-SLAM algorithms become unstable and the system state is often unobservable.
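To make the low-parallax issue concrete, the Python sketch below (our own illustration, not code from the talk) computes the parallax angle that a point subtends at two camera centres. The function name `parallax_angle` and the example coordinates are hypothetical. Moving 1 m straight ahead towards a point 20 m away and 2 m to the side gives roughly a tenth of the parallax produced by a 1 m sideways move, and a point lying exactly on the line of travel gives none at all.

```python
import math


def parallax_angle(c1, c2, p):
    """Angle (radians) at point p between the rays from camera centres c1 and c2."""
    r1 = [pi - ci for pi, ci in zip(p, c1)]
    r2 = [pi - ci for pi, ci in zip(p, c2)]
    dot = sum(a * b for a, b in zip(r1, r2))
    n1 = math.sqrt(sum(a * a for a in r1))
    n2 = math.sqrt(sum(a * a for a in r2))
    # Clamp to [-1, 1] so rounding error cannot push acos out of its domain
    return math.acos(max(-1.0, min(1.0, dot / (n1 * n2))))


point = (2.0, 0.0, 20.0)  # hypothetical point: 20 m ahead, 2 m to the side
forward = parallax_angle((0, 0, 0), (0, 0, 1), point)       # camera moves 1 m ahead
sideways = parallax_angle((0, 0, 0), (1, 0, 0), point)      # camera moves 1 m sideways
on_axis = parallax_angle((0, 0, 0), (0, 0, 1), (0, 0, 20))  # point on the line of travel

print(f"forward:  {math.degrees(forward):.2f} deg")   # about 0.30 deg
print(f"sideways: {math.degrees(sideways):.2f} deg")  # about 2.85 deg
print(f"on axis:  {math.degrees(on_axis):.2f} deg")   # 0.00 deg
```

With parallax this small, a depth estimated by triangulation becomes extremely sensitive to pixel noise, which is the instability described above.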

Furthermore, V-SLAM is a highly non-convex problem: slightly erroneous initial estimates can easily lead to sub-optimal final solutions, and this problem is especially pronounced under collinear motion.

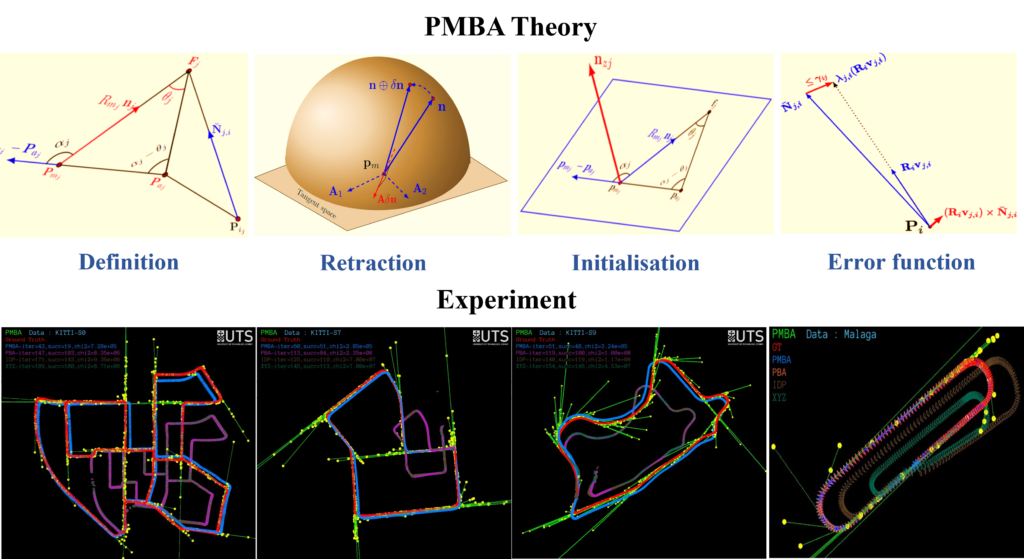

This seminar addresses the observability of urban SLAM with monocular cameras. First, a novel formulation (PMBA) is presented that addresses the problem with a full manifold approach: we parameterise map points as direction vectors on the S² manifold together with parallax angles, and express camera poses in the SE(3) Lie group. Despite low-parallax features, the PMBA formulation yields a stable optimisation configuration in which the associated Hessian matrix remains positive definite at every iteration. This guarantees local state observability and a fast convergence rate.

For initialisation, we show how the PMBA formulation can be simplified into a convex form that does not require the conventional iterative estimation process. This convex problem robustly handles collinear camera motion and noisy measurements, and produces near-optimal initial estimates.

A series of quantitative analyses is performed on well-known benchmark datasets, demonstrating the effectiveness of our algorithm in urban environments.

Bio:

Liyang Liu is a research fellow with RTCMA/ACFR. Her work focuses on robotic perception and real-time robot development. Her expertise is in SLAM, 3D Reconstruction, Computer Vision and Optimisation. She is also experienced in robot collision avoidance and 3D object manipulation, integrating perception systems with ground vehicle navigation and robot arm motion planning. She is currently involved in an unmanned ground vehicle project for mine-site inspection. Before joining ACFR, she worked as both a research fellow and a research engineer at UTS on many robotics projects, including Automation in Construction, a climbing robot for infrastructure inspection and maintenance, and an assistive robot for Aged Care. Her PhD work is on Observability and Convergence of Urban Visual SLAM. Before entering academia, she worked as a research engineer in the private sector, in areas including consumer electronics, marine defence and speech recognition.